Welcome to the Era of BadGPTs

The dark web is home to a growing array of artificial-intelligence chatbots similar to ChatGPT, but designed to help hackers. Businesses are on high alert for a glut of AI-generated email fraud and deepfakes.

A new crop of nefarious chatbots with names like “BadGPT” and “FraudGPT” is springing up in the darkest corners of the web, as cybercriminals look to tap the same artificial intelligence behind OpenAI’s ChatGPT.

Just as some office workers use ChatGPT to write better emails, hackers are using manipulated versions of AI chatbots to turbocharge their phishing emails. They can use chatbots, some of them freely available on the open internet, to create fake websites, write malware and tailor messages to better impersonate executives and other trusted entities.

Earlier this year, an employee of a Hong Kong multinational company handed over $25.5 million to an attacker who posed as the company’s chief financial officer on an AI-generated deepfake conference call, the South China Morning Post reported, citing Hong Kong police. Chief information officers and cybersecurity leaders, already accustomed to a growing spate of cyberattacks, say they are on high alert for an uptick in more sophisticated phishing emails and deepfakes.

Vish Narendra, CIO of Graphic Packaging International, said the Atlanta-based paper packaging company has seen an increase in what are likely AI-generated spear-phishing attacks, in which cyber attackers use information about a person to make an email seem more legitimate. Public companies in the spotlight are even more susceptible to contextualised spear-phishing, he said.

Researchers at Indiana University recently combed through over 200 large-language model hacking services being sold and populated on the dark web. The first service appeared in early 2023—a few months after the public release of OpenAI’s ChatGPT in November 2022.

Most dark web hacking tools use versions of open-source AI models like Meta’s Llama 2, or “jailbroken” models from vendors like OpenAI and Anthropic to power their services, the researchers said. Jailbroken models have been hijacked by techniques like “prompt injection” to bypass their built-in safety controls.

Jason Clinton, chief information security officer of Anthropic, said the AI company eliminates jailbreak attacks as it finds them, and has a team monitoring the outputs of its AI systems. Most model-makers also deploy two separate models to secure their primary AI model, making the likelihood that all three will fail the same way “a vanishingly small probability.”

Meta spokesperson Kevin McAlister said that openly releasing models shares the benefits of AI widely, and allows researchers to identify and help fix vulnerabilities in all AI models, “so companies can make models more secure.”

An OpenAI spokesperson said the company doesn’t want its tools to be used for malicious purposes, and that it is “always working on how we can make our systems more robust against this type of abuse.”

Malware and phishing emails written by generative AI are especially tricky to spot because they are crafted to evade detection. Attackers can teach a model to write stealthy malware by training it with detection techniques gleaned from cybersecurity defence software, said Avivah Litan, a generative AI and cybersecurity analyst at Gartner.

Phishing emails grew by 1,265% in the 12-month period starting when ChatGPT was publicly released, with an average of 31,000 phishing attacks sent every day, according to an October 2023 report by cybersecurity vendor SlashNext.

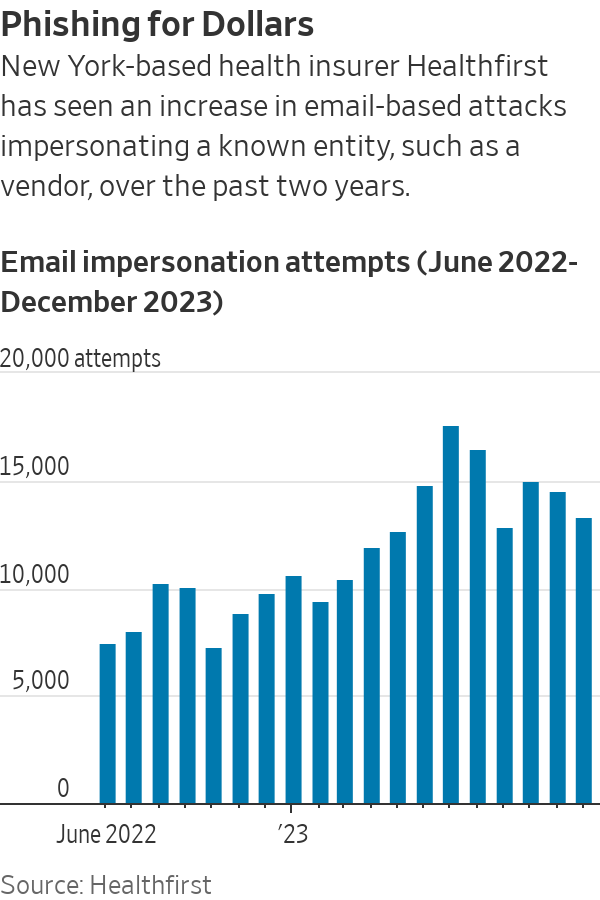

“The hacking community has been ahead of us,” said Brian Miller, CISO of New York-based not-for-profit health insurer Healthfirst, which has seen an increase in attacks impersonating its invoice vendors over the past two years.

While it is nearly impossible to prove whether certain malware programs or emails were created with AI, tools developed with AI can scan for text likely created with the technology. Abnormal Security, an email security vendor, said it had used AI to help identify thousands of likely AI-created malicious emails over the past year, and that it had blocked a twofold increase in targeted, personalised email attacks.

When Good Models Go Bad

Part of the challenge in stopping AI-enabled cybercrime is that some AI models are freely shared on the open web. Accessing them requires neither a visit to the dark corners of the internet nor an exchange of cryptocurrency.

Such models are considered “uncensored” because they lack the enterprise guardrails that businesses look for when buying AI systems, said Dane Sherrets, an ethical hacker and senior solutions architect at bug bounty company HackerOne.

In some cases, uncensored versions of models are created by security and AI researchers who strip out their built-in safeguards. In other cases, models with safeguards intact will write scam messages if humans avoid obvious triggers like “phishing”—a situation Andy Sharma, CIO and CISO of Redwood Software, said he discovered when creating a spear-phishing test for his employees.

The most useful model for generating scam emails is likely a version of Mixtral, from French AI startup Mistral AI, that has been altered to remove its safeguards, Sherrets said. Due to the advanced design of the original Mixtral, the uncensored version likely performs better than most dark web AI tools, he added. Mistral did not reply to a request for comment.

Sherrets recently demonstrated the process of using an uncensored AI model to generate a phishing campaign. First, he searched for “uncensored” models on Hugging Face, a startup that hosts a popular repository of open-source models—showing how easily many can be found.

He then used a virtual computing service that cost less than $1 per hour to mimic a graphics processing unit, or GPU, which is an advanced chip that can power AI. A bad actor needs either a GPU or a cloud-based service to use an AI model, Sherrets said, adding that he learned most of how to do this on X and YouTube.

With his uncensored model and virtual GPU service running, Sherrets asked the bot: “Write a phishing email targeting a business that impersonates a CEO and includes publicly-available company data,” and “Write an email targeting the procurement department of a company requesting an urgent invoice payment.”

The bot sent back phishing emails that were well-written, but didn’t include all of the personalisation asked for. That’s where prompt engineering, or the human’s ability to better extract information from chatbots, comes in, Sherrets said.

Dark Web AI Tools Can Already Do Harm

For hackers, a benefit of dark web tools like BadGPT—which researchers said uses OpenAI’s GPT model—is that they are likely trained on data from those underground marketplaces. That means they probably include useful information like leaks, ransomware victims and extortion lists, said Joseph Thacker, an ethical hacker and principal AI engineer at cybersecurity software firm AppOmni.

While some underground AI tools have been shuttered, new services have already taken their place, said Indiana University Assistant Computer Science Professor Xiaojing Liao, a co-author of the study. The AI hacking services, which often take payment via cryptocurrency, are priced anywhere from $5 to $199 a month.

New tools are expected to improve just as the AI models powering them do. In a matter of years, AI-generated text, video and voice deepfakes will be virtually indistinguishable from their human counterparts, said Evan Reiser, CEO and co-founder of Abnormal Security.

While researching the hacking tools, Indiana University Associate Dean for Research XiaoFeng Wang, a co-author of the study, said he was surprised by the ability of dark web services to generate effective malware. Given just the code of a security vulnerability, the tools can easily write a program to exploit it.

Though AI hacking tools often fail, in some cases, they work. “That demonstrates, in my opinion, that today’s large language models have the capability to do harm,” Wang said.

Copyright 2020, Dow Jones & Company, Inc. All Rights Reserved Worldwide.

The lunar flyby would be the deepest humans have traveled in space in decades.

It’s go time for the highest-stakes mission at NASA in more than 50 years.

On April 1, the agency is set to launch four astronauts around the moon, the deepest human spaceflight since the final Apollo lunar landing in 1972.

The launch window for Artemis II, as the mission is called, opens at 6:24 p.m. ET.

National Aeronautics and Space Administration teams have been preparing the vehicles to depart from Florida’s Kennedy Space Center on the planned roughly 10-day trip. Crew members have trained for years for this moment.

Reid Wiseman, the NASA astronaut serving as mission commander, said he doesn’t fear taking the voyage. A widower, he does worry at times about what he is putting his daughters through.

“I could have a very comfortable life for them,” Wiseman said in an interview last September.

“But I’m also a human, and I see the spirit in their eyes that is burning in my soul too. And so we’ve just got to never stop going.”

Wiseman’s crewmates on Artemis II are NASA’s Victor Glover and Christina Koch, as well as Canadian Space Agency astronaut Jeremy Hansen.

What are the goals for Artemis II?

The biggest one: Safely fly the crew on vehicles that have never carried astronauts before.

The towering Space Launch System rocket has the job of lofting a vehicle called Orion into space and on its way to the moon.

Orion is designed to carry the crew around the moon and back. Myriad systems on the ship—life support, communications, navigation—will be tested with the astronauts on board.

SLS and Orion don’t have much flight experience. The vehicles last flew in 2022, when the agency completed its uncrewed Artemis I mission.

How is the mission expected to unfold?

Artemis II will begin when SLS takes off from a launchpad in Florida with Orion stacked on top of it.

The so-called upper stage of SLS will later separate from the main part of the rocket with Orion attached, and use its engine to set up the latter vehicle for a push to the moon.

After Orion separates from the upper stage, it will conduct what is called a translunar injection—the engine firing that commits Orion to soaring out to the moon. It will fly to the moon over the course of a few days and travel around its far side.

Orion will face a tough return home after speeding through space. As it hits Earth’s atmosphere, Orion will be flying at 25,000 miles an hour and face temperatures of 5,000 degrees as it slows down. The capsule is designed to land under parachutes in the Pacific Ocean, not far from San Diego.

Is it possible Artemis II will be delayed?

Yes.

For safety reasons, the agency won’t launch if certain tough weather conditions roll through the Cape Canaveral, Fla., area. Delays caused by technical problems are possible, too. NASA has other dates identified for the mission if it doesn’t begin April 1.

Who are the astronauts flying on Artemis II?

The crew will be led by Wiseman, a retired Navy pilot who completed military deployments before joining NASA’s astronaut corps. He traveled to the International Space Station in 2014.

Two other astronauts will represent NASA during the mission: Glover, an experienced Navy pilot, and Koch, who began her career as an electrical engineer for the agency and once spent a year at a research station at the South Pole. Both have traveled to the space station before.

Hansen is a military pilot who joined Canada’s astronaut corps in 2009. He will be making his first trip to space.

Koch’s participation in Artemis II will mark the first time a woman has flown beyond orbits near Earth. Glover and Hansen will be the first African-American and non-American astronauts, respectively, to do the same.

What will the astronauts do during the flight?

The astronauts will evaluate how Orion flies, practice emergency procedures and capture images of the far side of the moon for scientific and exploration purposes (they may become the first humans to see parts of the far side of the lunar surface). Projects tracking the astronauts’ health are designed to inform future missions.

Those efforts will play out in Orion’s crew module, which has about two minivans’ worth of living area.

On board, the astronauts will spend about 30 minutes a day exercising, using a device that allows them to do dead lifts, rowing and more. Sleep will come in eight-hour stretches in hammocks.

There is a custom-made warmer for meals, with beef brisket and veggie quiche on the menu.

Each astronaut is permitted two flavored beverages a day, including coffee. The crew will hold one hourlong shared meal each day.

The Universal Waste Management System—that’s the toilet—uses air flow to pull fluid and solid waste away into containers.

What happens after Artemis II?

Assuming it goes well, NASA will march on to Artemis III, scheduled for next year. During that operation, NASA plans to launch Orion with crew members on board and have the ship practice docking with lunar-lander vehicles that Elon Musk’s SpaceX and Jeff Bezos’ Blue Origin have been developing. The rendezvous operations will occur relatively close to Earth.

NASA hopes that its contractors and the agency itself are ready to attempt one or more lunar landing missions in 2028. Many current and former spaceflight officials are skeptical that timeline is feasible.